Arcane Diffusion

This is a fine-tuned Stable Diffusion model trained on images from the TV show Arcane. Use the token arcane style in your prompts to get the effect.
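As a small illustration of how the token is used, the sketch below prefixes a prompt with arcane style unless the prompt already contains it. The helper name `add_style_token` and the constant `STYLE_TOKEN` are hypothetical and not part of the model or of any library. Putting the token at the start of the prompt is an assumption for the sketch, not a requirement stated by the model.

```python
# Hypothetical helper, not part of diffusers or this model repository.
STYLE_TOKEN = "arcane style"


def add_style_token(prompt: str, token: str = STYLE_TOKEN) -> str:
    """Prefix the prompt with the style token unless it is already present."""
    cleaned = prompt.strip()
    # Case-insensitive check so "Arcane Style" is not added a second time.
    if token.lower() in cleaned.lower():
        return cleaned
    # An empty prompt becomes just the token.
    return f"{token}, {cleaned}" if cleaned else token


print(add_style_token("a magical princess with golden hair"))
# prints: arcane style, a magical princess with golden hair
```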

If you enjoy my work, please consider supporting me

🧨 Diffusers

This model can be used just like any other Stable Diffusion model. For more information, please have a look at the Stable Diffusion documentation.

You can also export the model to ONNX, MPS and/or FLAX/JAX.

```python
#!pip install diffusers transformers scipy torch
from diffusers import StableDiffusionPipeline
import torch

model_id = "nitrosocke/Arcane-Diffusion"
pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)
pipe = pipe.to("cuda")

prompt = "arcane style, a magical princess with golden hair"
image = pipe(prompt).images[0]

image.save("./magical_princess.png")
```
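The example above hardcodes "cuda" and float16. On a machine without an NVIDIA GPU, a possible variation is to pick the device at runtime, as in this sketch. The function names `choose_device_and_dtype` and `load_pipeline` are hypothetical. Falling back to float32 on MPS and CPU is a conservative assumption, not something the model card specifies.

```python
def choose_device_and_dtype(cuda_available: bool, mps_available: bool) -> tuple[str, str]:
    """Return (device, dtype name) for the given availability flags."""
    if cuda_available:
        return "cuda", "float16"  # half precision, as in the example above
    if mps_available:
        return "mps", "float32"   # assumption: full precision on Apple Silicon
    return "cpu", "float32"       # assumption: full precision on CPU


def load_pipeline(model_id: str = "nitrosocke/Arcane-Diffusion"):
    """Load the pipeline on the best available device (downloads the weights)."""
    # Imported here so the selection logic above works without torch installed.
    import torch
    from diffusers import StableDiffusionPipeline

    # Older torch builds may not ship the MPS backend at all.
    mps_backend = getattr(torch.backends, "mps", None)
    device, dtype_name = choose_device_and_dtype(
        torch.cuda.is_available(),
        mps_backend is not None and mps_backend.is_available(),
    )
    pipe = StableDiffusionPipeline.from_pretrained(
        model_id, torch_dtype=getattr(torch, dtype_name)
    )
    return pipe.to(device)
```

Calling `pipe = load_pipeline()` would then replace the `from_pretrained` and `to("cuda")` lines, with the rest of the example unchanged.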

Gradio & Colab

We also support a Gradio Web UI and Colab with Diffusers to run fine-tuned Stable Diffusion models:

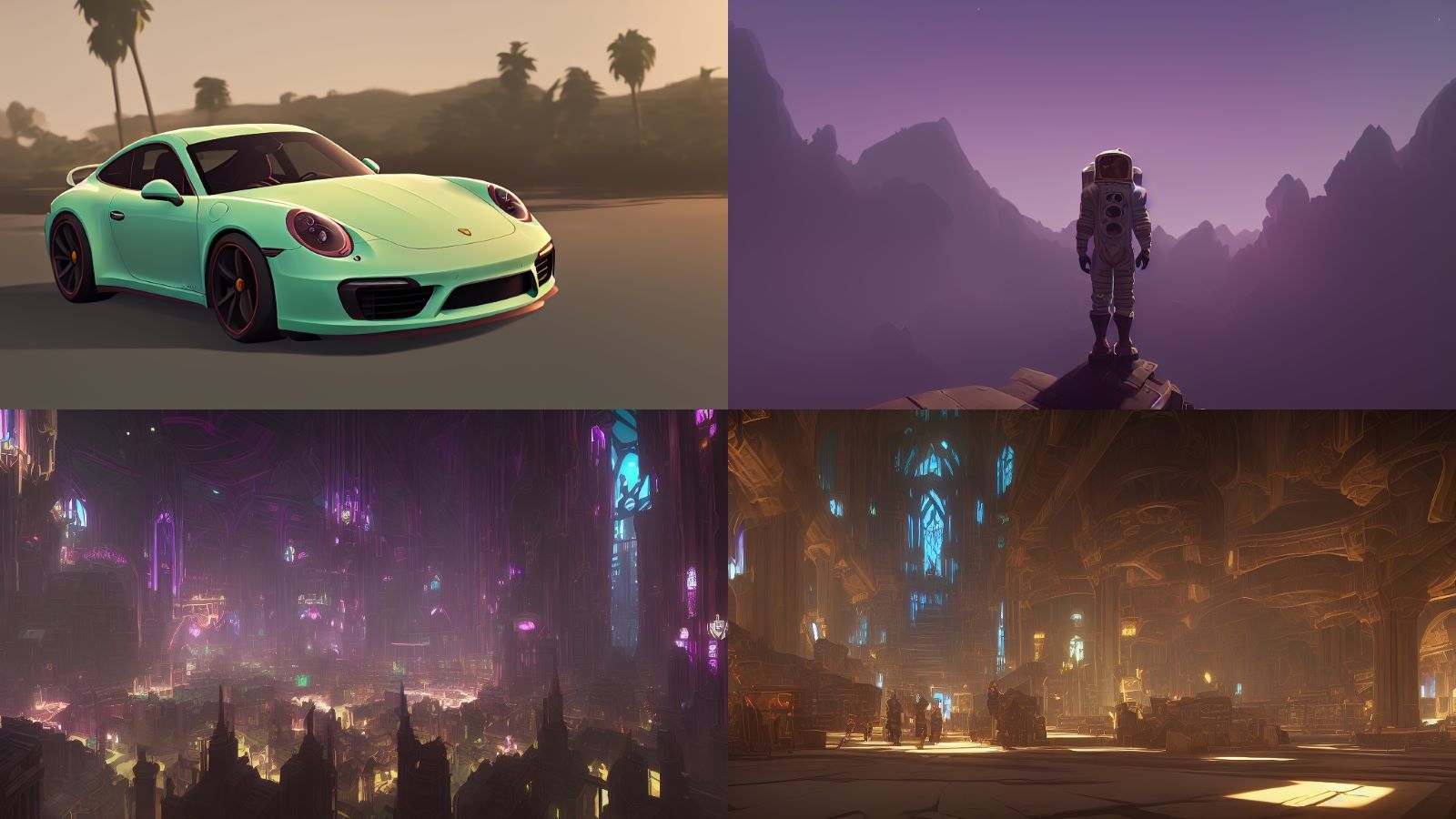

Sample images from v3:

Sample images from the model:

Sample images used for training:

Version 3 (arcane-diffusion-v3): This version uses the new train-text-encoder setting and improves the quality and editability of the model immensely. Trained on 95 images from the show in 8000 steps.

Version 2 (arcane-diffusion-v2): This version uses the diffusers-based DreamBooth training, where prior-preservation loss is far more effective. The diffusers weights were then converted with a script to a ckpt file in order to work with AUTOMATIC1111's repo. Training was done with 5k steps for a direct comparison to v1, and the results show that it needs more steps for a more prominent result. Version 3 will be tested with 11k steps.

Version 1 (arcane-diffusion-5k): This model was trained using Unfrozen Model Textual Inversion with the prior-preservation loss training method. There is still a slight shift towards the style even when the arcane token is not used.
